Token Efficiency in AI-Assisted Development: Why Your Tool Integration Architecture Matters More Than You Think

Optimized tool integration can reduce token consumption by up to 81% compared to naive data passing. A controlled study reveals how architectural decisions, not protocol choices, determine scalability and cost in production AI systems.

Token consumption in AI-assisted development isn't just a line item on your API bill. It's a critical constraint that shapes what's practical to build at scale. After conducting controlled experiments across five distinct tool integration approaches, we discovered something that challenges conventional wisdom: the protocol you choose matters far less than how you architect data flow.

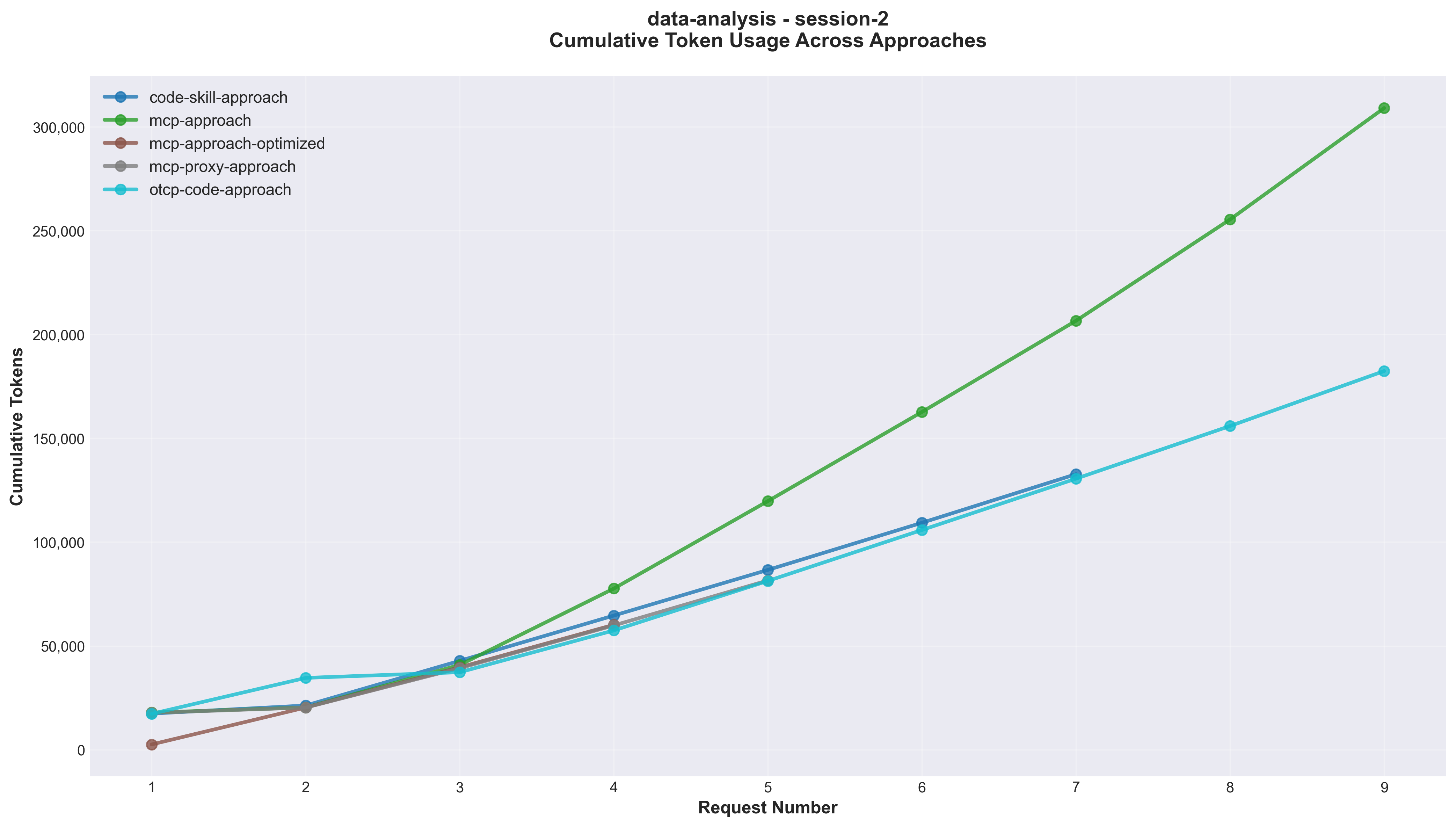

The results are stark: the optimized MCP architecture consumed about 60,000 tokens, while vanilla MCP burned through as many as 309,000 tokens on identical tasks. That's a 5x difference driven entirely by architectural decisions around data passing versus file references. When your production system is processing thousands of requests daily, these differences compound into meaningful cost centers and performance bottlenecks.

This isn't theoretical optimization. These findings emerged from instrumented runs that measured token consumption on a data analysis task with a realistic dataset. The implications extend beyond cost: context window utilization, request latency, scalability characteristics, and system reliability all trace back to these fundamental architectural choices.

The Research Question

Modern AI-assisted development relies on large language models that consume tokens for both input and output. As organizations integrate AI into production workflows, a critical question emerges: how does tool integration architecture affect token consumption?

We designed controlled experiments to answer this with precision. Same task description. Same 500-row dataset. Same required outputs. Same model (Claude Sonnet 4.5). Only one variable changed: the approach for integrating AI with development tools.

The five approaches tested represent distinct philosophies:

- Code generation baseline - LLM writes and executes Python scripts

- Vanilla MCP - Model Context Protocol with direct data passing

- Optimized MCP - File-path based tool architecture

- Progressive discovery proxy - On-demand tool loading via meta-tools

- UTCP code-mode - TypeScript code generation calling MCP tools

Each approach promised different tradeoffs between flexibility, efficiency, and implementation complexity. The question: which promises hold up under measurement?

Why Token Efficiency Matters at Scale

Token consumption directly impacts four critical dimensions of production AI systems:

Operational costs compound rapidly. At current Claude Sonnet 4.5 pricing, the difference between 60K and 300K tokens per request translates to $0.21 versus $0.99 per execution. For a system processing 1,000 requests monthly, that's $210 versus $990—a $9,360 annual difference from a single workflow. Multiply across dozens of automated workflows and the cost delta becomes a budget line item worth optimizing.

Context window utilization determines what's possible. Modern LLMs have finite context windows. Inefficient approaches that burn 300K tokens on data passing leave minimal room for complex reasoning, multi-step workflows, or rich documentation. Efficient approaches preserving context enable more sophisticated automation within the same window.

Latency scales with token count. Network transfer, model processing, and token generation all correlate with token volume. Reducing tokens from 300K to 60K doesn't just save money—it cuts processing time, improving user experience and system throughput.

Scalability characteristics diverge. Some architectures keep token usage nearly flat as datasets grow. Others re-send data on every call, so usage climbs with dataset size and becomes prohibitively expensive. The difference between 1.5x and 2.9x scaling factors determines whether your approach works with 10,000-row datasets or fails catastrophically.

These aren't hypothetical concerns. Production AI systems face all four simultaneously. Understanding the relationships between architectural choices and token efficiency becomes a prerequisite for building sustainable, scalable AI automation.
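The operational-cost arithmetic above can be sketched as a small helper. The per-execution figures ($0.21 and $0.99) and the 1,000 monthly requests come from the text; the function and variable names are illustrative only.

```typescript
// Annual cost for a workflow, given a per-execution cost in cents.
// Working in whole cents avoids floating-point drift in the totals.
function annualCostUsd(costPerExecutionCents: number, executionsPerMonth: number): number {
  return (costPerExecutionCents * executionsPerMonth * 12) / 100;
}

const optimizedAnnual = annualCostUsd(21, 1000); // $0.21 per execution
const vanillaAnnual = annualCostUsd(99, 1000); // $0.99 per execution
const annualDifference = vanillaAnnual - optimizedAnnual;

console.log({ optimizedAnnual, vanillaAnnual, annualDifference });
// → { optimizedAnnual: 2520, vanillaAnnual: 11880, annualDifference: 9360 }
```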

Experimental Design: Controlling Variables

Rigorous comparison requires identical conditions across approaches. We established these controlled variables:

Task description: A 160-word prompt requesting statistical analysis and visualization of employee data. Four specific analyses required: department distribution, salary by location, experience correlation, performance metrics. Four visualizations required, drawn from three chart types: bar charts, scatter plots, and pie charts.

Dataset: 500 employee records with 6 columns (name, department, salary, years_experience, performance_score, location). Realistic distributions across 7 departments and 7 locations. 45KB CSV file representing typical business data.

Required outputs: Consistent deliverables across all approaches—analysis results and PNG visualizations saved to specified directories. Success criteria identical regardless of approach.

Model and environment: Claude Sonnet 4.5 (claude-sonnet-4-5-20250929) via Claude Code CLI v2.0.42. Network instrumentation capturing full request/response payloads including token usage metadata.

Measurement protocol: Three sessions per approach to measure variance. Full network logs captured, PII redacted, token metrics extracted and analyzed. Both per-request and cumulative token usage tracked.

This controlled design isolates architectural impact from confounding variables. Differences in results trace directly to differences in approach design.
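As a rough illustration of the measurement step, the sketch below sums token usage from captured API responses. The `usage` field names follow the Anthropic Messages API response format. The log shape (`LoggedResponse`), the choice to count cache tokens toward the total, and the sample numbers are assumptions made for this example, not details of the study's tooling.

```typescript
// `usage` object as returned in an Anthropic Messages API response.
interface Usage {
  input_tokens: number;
  output_tokens: number;
  cache_creation_input_tokens?: number | null;
  cache_read_input_tokens?: number | null;
}

// Hypothetical shape of one captured response; only `usage` is modeled.
interface LoggedResponse {
  usage: Usage;
}

// Assumption: every token category counts toward the request total.
function tokensForRequest(u: Usage): number {
  return (
    u.input_tokens +
    u.output_tokens +
    (u.cache_creation_input_tokens ?? 0) +
    (u.cache_read_input_tokens ?? 0)
  );
}

// Per-request and cumulative totals for one session.
function sessionTotals(log: LoggedResponse[]): {
  perRequest: number[];
  cumulative: number[];
  total: number;
} {
  const perRequest = log.map((r) => tokensForRequest(r.usage));
  const cumulative: number[] = [];
  let running = 0;
  for (const t of perRequest) {
    running += t;
    cumulative.push(running);
  }
  return { perRequest, cumulative, total: running };
}

// Made-up values that only exercise the function; not study measurements.
const exampleSession = sessionTotals([
  { usage: { input_tokens: 1200, output_tokens: 300, cache_read_input_tokens: 9000 } },
  { usage: { input_tokens: 800, output_tokens: 450, cache_creation_input_tokens: 2000 } },
]);
```

Tracking both series matters because the per-request view exposes expensive individual calls, while the cumulative view shows how quickly a session approaches its budget.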

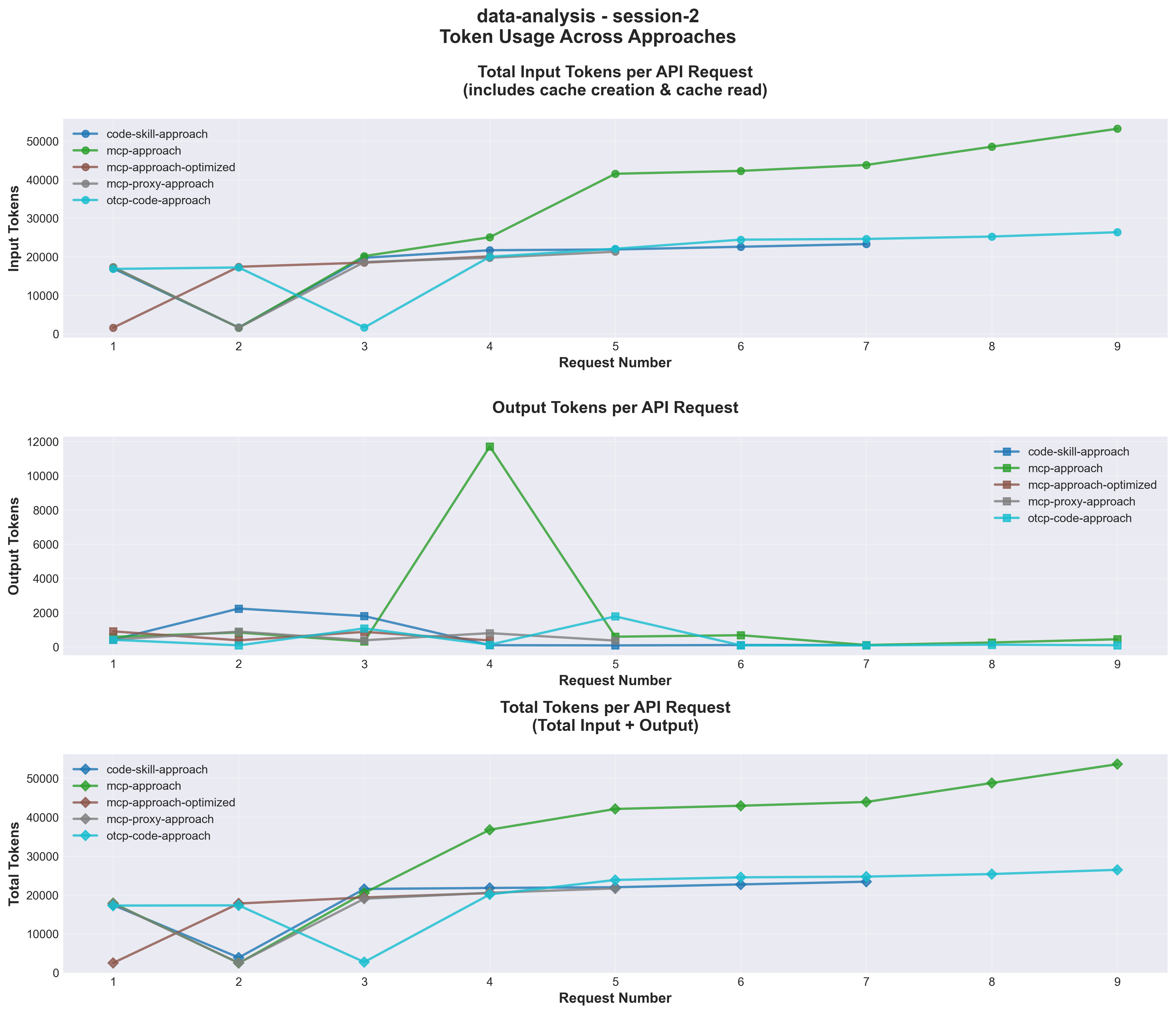

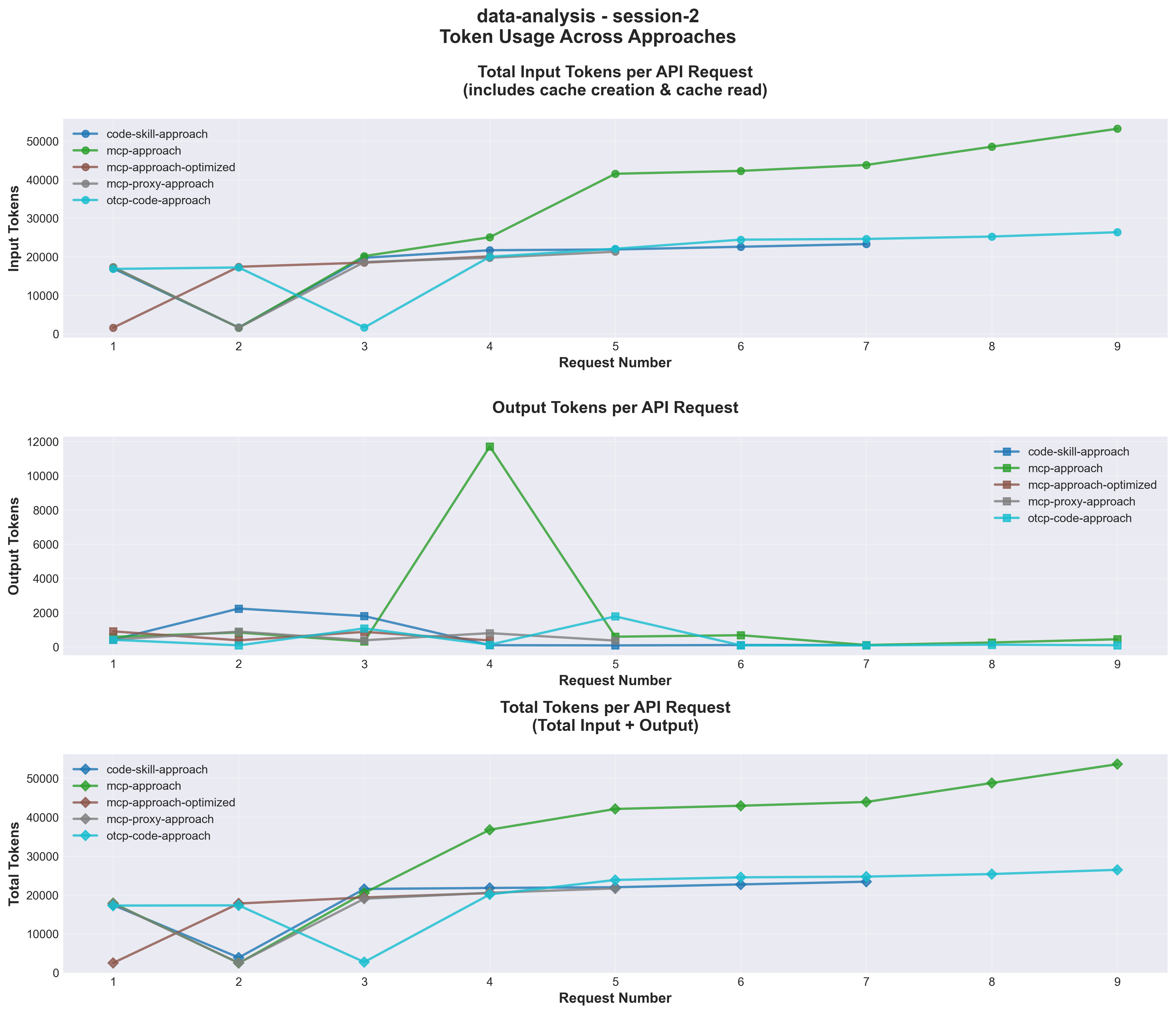

_Figure 1: Token consumption per API request across five approaches. MCP Optimized achieves consistently low token usage through file-path architecture._

Five Approaches Under Test

Approach 1: Code-Skill Baseline

The baseline represents traditional AI-assisted development. The LLM generates Python scripts for analysis and visualization, iteratively refining through execution feedback. No specialized tools—just code generation and debugging.

Architecture: Skills provide domain guidance without explicit tool definitions. The AI writes complete scripts, executes them, observes results, and iterates. Each iteration potentially requires a new API call with updated code.

Token characteristics: Code verbosity dominates. Full script content appears in every request. Debugging iterations add variance—some tasks require 6 calls, others need 8, depending on first-attempt success.

Measured results: 108K-158K tokens across three sessions. 6-8 API calls per session. High variance (CV 18.7%) from solution path differences.

When to use: One-off tasks, exploratory analysis, rapidly changing requirements. Zero development cost—immediately available. Accepts high per-execution cost for maximum flexibility.

Approach 2: Vanilla MCP

Model Context Protocol provides structured tool interfaces. This implementation exposes tools like read_csv_data, analyze_data, and create_visualization with direct parameter passing.

Architecture: MCP server defines tools with data parameters. The AI calls tools sequentially, passing full datasets as JSON arrays in tool parameters. Each visualization requires a separate tool call with complete data.

Token characteristics: Data passing dominates. Every tool call includes the full 500-row dataset as JSON (8K-12K tokens per call). Repeated data duplication across operations.

Measured results: 204K-309K tokens across three sessions. 7-9 API calls. Highest per-call token cost (29K-34K average). Poor scalability: token usage grew 2.0-2.9x from 20 to 500 rows because every tool call re-sends the dataset.

When to use: Small datasets only (< 100 rows). Prototyping tool interfaces. Situations where file I/O is unavailable. Avoid for production at scale.

Approach 3: MCP Optimized (Winner)

File-path based MCP architecture eliminates data duplication. Tools accept file paths instead of data arrays. The server reads files internally, processing data without context pollution.

Architecture: Tools like analyze_csv_file and create_visualization_from_file accept file paths. Server handles file I/O internally. Enables parallel tool execution—multiple visualizations in single API call.

Token characteristics: Minimal context consumption. File paths represent ~50 tokens versus ~10,000 for data arrays. Parallel execution reduces API calls. Consistent performance (CV 0.6%).

Measured results: 60K tokens (all three sessions within 1% variance). 4 API calls. Lowest total tokens, lowest per-call average (15K). Sub-linear scalability with dataset size.

When to use: Production workflows. Frequently repeated tasks, where the $0.23 saved per execution can repay tool development. Scenarios requiring consistency and predictability. Any dataset > 100 rows.

Approach 4: MCP Proxy (Progressive Discovery)

One-MCP provides progressive tool discovery through meta-tools. Instead of loading all tool descriptions upfront (10K+ tokens), it exposes two meta-tools initially: describe_tools and use_tool.

Architecture: Initial context includes only meta-tools (~400 tokens). AI discovers needed tools dynamically via describe_tools, then calls them via use_tool. Tool descriptions cached across sessions.

Token characteristics: High initial overhead from discovery (Session 1: 155K tokens). Subsequent sessions optimized (Sessions 2-3: ~81K tokens). 47% reduction after warm-up.

Measured results: 81K-155K tokens depending on session. 5-8 API calls. High initial variance (CV 39.9% across all three sessions) that stabilizes after discovery (CV 0.5% for sessions 2-3).

When to use: Large tool catalogs (> 20 tools). Long-running systems with repeated usage. Scenarios where upfront tool loading is expensive. Requires session persistence.

Approach 5: UTCP Code-Mode (Underperformer)

Universal Tool Calling Protocol with code-mode bridge generates TypeScript code that calls MCP tools in a single execution. Claimed benefits: 60% faster, 68% fewer tokens, 88% fewer API calls.

Architecture: LLM generates TypeScript code calling MCP tools. Code executes once, producing all outputs. Combines code generation with tool invocation.

Token characteristics: Unexpectedly high. Code generation adds verbosity. TypeScript compilation and execution overhead. Error handling requires additional iterations.

Measured results: 182K-240K tokens across three sessions. 9-11 API calls. 40-68% worse than baseline—contradicting claimed efficiency gains. Moderate variance (CV 15.3%).

When to use: Complex workflows with conditional logic (untested hypothesis). Avoid for data analysis tasks based on current evidence. May require different use cases to shine.

Results: The Hierarchy of Efficiency

Ranking by total token consumption reveals a clear hierarchy:

| Rank | Approach | Avg Tokens | Avg Calls | Tokens/Call | vs Baseline |
|---|---|---|---|---|---|
| 1 | MCP Optimized | 60,420 | 4 | 15,105 | +44-81% |
| 2 | MCP Proxy | 81,415-154,734 | 5-8 | 16,283-19,342 | +25-50% |
| 3 | Code-Skill | 108,566-157,749 | 6-8 | 18,094-19,719 | Baseline |
| 4 | UTCP Code-Mode | 182,377-239,542 | 9-11 | 20,264-21,777 | -40-68% |
| 5 | MCP Vanilla | 204,099-309,053 | 7-9 | 29,157-34,339 | -88-195% |

MCP Optimized is defined by its consistency. Three sessions yielded token counts within 1% variance: 60,307, 60,144, and 60,808 tokens. Four API calls per session, averaging 15,000 tokens per call.

The architecture explains the performance. File-path parameters consume ~400 tokens total versus ~80,000 tokens for data duplication in vanilla MCP. Parallel tool execution—generating four visualizations in a single API request—eliminates sequential call overhead.

Scalability characteristics: From 20-row to 500-row datasets, token usage increased only 1.5x. Linear or sub-linear scaling enables this approach to handle 10,000-row datasets with minimal token increase.

Cost analysis: At $0.21 per execution versus $0.44 for baseline, this approach saves $0.23 per execution. At 1,000 monthly executions, annual savings exceed $2,700 compared to baseline, which recovers a $2,500 development investment in roughly 11 months.

MCP Proxy: The Warm-Up Winner

Session 1 consumed 154,734 tokens through tool discovery overhead. Sessions 2 and 3 dropped to ~81,500 tokens—a 47% reduction. After warm-up, this approach rivals the efficiency of MCP Optimized while maintaining flexibility for large tool catalogs.

The progressive discovery pattern matters for systems with dozens of tools. Loading 50 tool descriptions upfront might consume 50K tokens. One-MCP reduces this to 400 tokens initially, loading specific tools only when needed.

When to deploy: Long-running systems with session persistence. Workflows using subsets of large tool catalogs. Scenarios where upfront tool loading costs dominate.

Code-Skill Baseline: The Flexibility Champion

High variance (108K-158K tokens) reflects different solution paths. Some tasks succeeded in 6 API calls, others required 8 due to debugging iterations. The flexibility to handle novel requirements without modification explains continued relevance despite efficiency disadvantages.

Economic model: Zero development cost. Immediate availability. Accepts 2-3x higher per-execution cost for maximum adaptability.

Use cases: One-off analyses, exploratory work, prototyping, requirements discovery. Situations where task repeatability is unknown.

UTCP Code-Mode: The Surprising Disappointment

Results contradicted claimed efficiencies. Instead of 68% fewer tokens, this approach consumed 40-68% more than baseline. Instead of 88% fewer API calls, it required more calls (9-11 versus 6-8).

Hypothesis: The approach may excel in different domains—complex workflows with conditional logic, orchestration tasks, multi-step processes with branching. Data analysis tasks don't leverage code generation advantages enough to offset additional abstraction overhead.

Recommendation: Avoid for data analysis. Consider re-evaluating for workflow orchestration use cases with different performance profiles.

MCP Vanilla: The Scalability Disaster

Data passing architecture fails at scale. Passing 500 rows as JSON arrays consumed 8K-12K tokens per tool call. Across 7-9 calls, data duplication alone added ~80K tokens of overhead.
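The duplication overhead above is a simple product of payload size and call count. The sketch below uses the stated ranges (8K-12K tokens per payload, 7-9 calls) and assumes, as an upper bound, that every call carries the full dataset. The function name is illustrative.

```typescript
// Tokens spent re-sending the same dataset on each tool call.
function duplicationOverhead(tokensPerPayload: number, callsWithPayload: number): number {
  return tokensPerPayload * callsWithPayload;
}

const overheadLow = duplicationOverhead(8000, 7); // smallest payload, fewest calls
const overheadHigh = duplicationOverhead(12000, 9); // largest payload, most calls
const overheadMidpoint = (overheadLow + overheadHigh) / 2;

console.log({ overheadLow, overheadHigh, overheadMidpoint });
// → { overheadLow: 56000, overheadHigh: 108000, overheadMidpoint: 82000 }
```

The midpoint lands close to the ~80K figure quoted above.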

Scaling factor: 2.0-2.9x from 20-row to 500-row datasets. Extrapolating to 10,000 rows suggests 500K+ token consumption—prohibitively expensive and context-window-threatening.

Verdict: Never use this approach in production. Educational value only as a counter-example of poor architectural choices.

_Figure 2: Cumulative token consumption across API requests. MCP Optimized maintains consistently low growth rate while vanilla approaches show steep accumulation._

Scalability Analysis: Where Approaches Break Down

Dataset size stress-tests architectural decisions. We measured token consumption at 20 rows and 500 rows to calculate scaling factors:

| Approach | 20 Rows | 500 Rows | Scaling Factor | 10K Row Projection |
|---|---|---|---|---|
| MCP Optimized | ~40K | ~60K | 1.5x | ~65K |
| MCP Proxy | ~60K | ~81K-155K | 1.3-2.6x | ~120K-250K |
| Code-Skill | ~100K | ~108K-158K | 1.1-1.6x | ~150K-220K |
| UTCP Code-Mode | ~140K | ~182K-240K | 1.3-1.7x | ~260K-350K |
| MCP Vanilla | ~105K | ~204K-309K | 2.0-2.9x | ~500K-800K |

MCP Optimized demonstrates sub-linear scaling because file paths consume constant tokens regardless of dataset size. A path like /data/employees.csv costs the same 20 tokens whether the file contains 100 rows or 100,000 rows.

This architectural property fundamentally changes scalability economics. While data-passing approaches hit context window limits at 1,000-2,000 rows, file-path approaches handle datasets 10-100x larger within the same token budget.
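For reference, a scaling factor here is the ratio of tokens at 500 rows to tokens at 20 rows. The sketch below recomputes it from the rounded table values, so results can differ slightly from the table's own figures. The function name is illustrative.

```typescript
// Ratio of token usage at the larger dataset to usage at the smaller one.
function scalingFactor(tokensSmall: number, tokensLarge: number): number {
  return tokensLarge / tokensSmall;
}

const rowGrowth = 500 / 20; // the dataset itself grew 25x

const optimizedFactor = scalingFactor(40000, 60000);
const vanillaFactorLow = scalingFactor(105000, 204000);
const vanillaFactorHigh = scalingFactor(105000, 309000);

console.log({ rowGrowth, optimizedFactor, vanillaFactorLow, vanillaFactorHigh });
```

Comparing each factor against the 25x growth in rows shows how much of every request is fixed overhead rather than data.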

Steep Scaling: The Data-Passing Penalty

MCP Vanilla scales steeply because the JSON payload grows in proportion to the row count and is re-sent on every tool call. At some inflection point, serialization overhead dominates: passing a 10,000-row dataset might consume 100K+ tokens just for data representation, leaving minimal context for reasoning.

This explains why vanilla MCP approaches fail in production. Initial prototypes with small datasets work fine. Scale to realistic data sizes, and token costs explode.

The Break-Even Point

For very small datasets, architectural differences matter least because the data payload is a small share of each request (the scaling table shows roughly 40K-140K tokens across approaches at 20 rows). But cross the 100-row threshold, and architectures diverge rapidly:

- 100 rows: File-path approaches maintain ~50K tokens; data-passing approaches hit ~150K

- 500 rows: File-path approaches reach ~60K tokens; data-passing approaches exceed 300K

- 1,000 rows: File-path approaches plateau ~65K tokens; data-passing approaches become unworkable

This threshold dictates architectural choices. Prototype-scale datasets forgive inefficiency. Production-scale datasets punish it severely.

Production Implications: Cost, Reliability, and Consistency

Economic Analysis

At 1,000 executions monthly, annual costs by approach:

| Approach | Per-Execution | Monthly | Annual | vs Baseline |
|---|---|---|---|---|
| MCP Optimized | $0.21 | $210 | $2,520 | +52% |
| MCP Proxy | $0.35 | $350 | $4,200 | +20% |
| Code-Skill | $0.44 | $440 | $5,280 | Baseline |
| UTCP Code-Mode | $0.66 | $660 | $7,920 | -49% |
| MCP Vanilla | $0.99 | $990 | $11,880 | -124% |

Variance and Predictability

Production systems require predictable costs and latency. Coefficient of variation reveals consistency:

| Approach | Mean Tokens | Std Dev | CV | Consistency |
|---|---|---|---|---|
| MCP Optimized | 60,420 | 343 | 0.6% | Excellent |
| MCP Vanilla | 271,020 | 57,512 | 21.2% | Poor |
| Code-Skill | 133,006 | 24,884 | 18.7% | Poor |
| UTCP Code-Mode | 204,011 | 31,149 | 15.3% | Moderate |
| MCP Proxy\* | 105,892 | 42,203 | 39.9%\* | Poor initially |

Why variance matters: High variance (> 15%) complicates capacity planning. Budget forecasts must accommodate worst-case scenarios. P99 latency becomes 2-3x P50 latency, degrading user experience. Anomaly detection struggles to distinguish normal variance from actual issues.

Low variance (< 5%) enables precise capacity planning, consistent SLAs, and reliable cost forecasting. Tool-based approaches with deterministic workflows naturally achieve this. Code generation approaches with iterative debugging inherently exhibit higher variance.
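The coefficient of variation is the standard deviation divided by the mean. The sketch below applies it to the three MCP Optimized session totals reported earlier (60,307, 60,144, and 60,808). Using the sample standard deviation (n - 1 in the denominator) is an assumption, since the article does not say which estimator was used.

```typescript
function mean(xs: number[]): number {
  return xs.reduce((acc, x) => acc + x, 0) / xs.length;
}

// Sample standard deviation (divides by n - 1); an assumed choice of estimator.
function sampleStdDev(xs: number[]): number {
  const m = mean(xs);
  const sumSquares = xs.reduce((acc, x) => acc + (x - m) * (x - m), 0);
  return Math.sqrt(sumSquares / (xs.length - 1));
}

// Coefficient of variation as a percentage of the mean.
function cvPercent(xs: number[]): number {
  return (sampleStdDev(xs) / mean(xs)) * 100;
}

const mcpOptimizedSessions = [60307, 60144, 60808];
const sessionMean = mean(mcpOptimizedSessions);
const sessionCv = cvPercent(mcpOptimizedSessions);

console.log(Math.round(sessionMean), sessionCv.toFixed(1) + "%");
// → 60420 "0.6%"
```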

Development Investment ROI

MCP Optimized requires upfront development: designing file-based tools, implementing server logic, testing across scenarios. Estimated investment: 1 week of engineering time (~$2,500 at typical senior engineer rates).

Break-even calculation:

Development cost: $2,500

Savings per execution: $0.44 - $0.21 = $0.23

Break-even executions: $2,500 / $0.23 ≈ 10,870 executions

At 1,000 executions per month, development costs amortize in about 11 months. At 100 executions, savings total $23 (under 1% of the investment). At 1,000 executions, they total $230 (about 9%).

With these figures, the investment pays off for high-volume workflows rather than occasional ones. The threshold moves toward "dozens" of executions only when tool development costs far less than a week of engineering time.
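A small sketch of the break-even arithmetic, using the figures stated above ($2,500 development cost, $0.44 baseline and $0.21 optimized per execution). The function name and the whole-cents representation are choices made for this example.

```typescript
// Executions needed before per-execution savings cover the development cost.
// Per-execution costs are passed in whole cents to keep the arithmetic exact.
function breakEvenExecutions(
  devCostUsd: number,
  baselineCents: number,
  optimizedCents: number,
): number {
  const savingsCents = baselineCents - optimizedCents;
  if (savingsCents <= 0) {
    return Infinity; // no savings, so the investment never pays back
  }
  return Math.ceil((devCostUsd * 100) / savingsCents);
}

const executionsToBreakEven = breakEvenExecutions(2500, 44, 21);
const monthsAt1000PerMonth = executionsToBreakEven / 1000;

console.log({ executionsToBreakEven, monthsAt1000PerMonth });
// → { executionsToBreakEven: 10870, monthsAt1000PerMonth: 10.87 }
```

Plugging in your own development estimate and per-execution costs is the quickest way to see whether a given workflow clears the threshold.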

Decision Framework: Choosing the Right Approach

Selecting an approach requires balancing three dimensions:

1. Execution Frequency

One-off tasks (1-5 executions): Choose Code-Skill baseline. Zero development cost, immediate results. Accept 2-3x higher per-execution cost for flexibility.

Recurring tasks (20-100 executions): Choose MCP Optimized or MCP Proxy when suitable tools already exist or are cheap to build. At $0.23 saved per execution, 100 executions recover about $23, so a $2,500 build does not pay for itself at this volume on token cost alone.

Production workflows (100+ executions): Choose MCP Optimized. Token savings repay a $2,500 build after roughly 10,870 executions, and the other benefits arrive earlier: consistency enables SLA compliance, and scalability supports growing datasets.

2. Variance Tolerance

Production SLAs (< 5% variance required): Only MCP Optimized and MCP Proxy (post-warm-up) achieve this. Deterministic workflows with fixed tool sequences eliminate variance sources.

Internal tools (10-20% variance acceptable): MCP Proxy or Code-Skill work. Moderate variance manageable without SLA requirements.

Experimentation (20-40% variance acceptable): Code-Skill optimizes for flexibility at the cost of predictability. Different solution paths create variance, but adaptability to novel requirements compensates.

3. Dataset Characteristics

Small datasets (< 100 rows): Architecture matters less because the data payload is small (the scaling table shows roughly 40K-140K tokens across approaches at 20 rows). Choose based on development cost and flexibility needs.

Medium datasets (100-1,000 rows): File-path architectures required. Data-passing approaches exceed 150K-300K tokens. MCP Optimized or Code-Skill only viable options.

Large datasets (> 1,000 rows): MCP Optimized required. Only sub-linear scaling supports these sizes. Data-passing approaches hit context window limits.

Decision Tree

Q1: Is this a one-off task (< 5 executions)?

YES → Code-Skill

NO → Continue to Q2

Q2: Is dataset > 100 rows AND variance < 5% required?

YES → MCP Optimized

NO → Continue to Q3

Q3: Do you have > 20 tools AND task repeats > 50 times?

YES → MCP Proxy

NO → Continue to Q4

Q4: Is execution count > 20 AND requirements stable?

YES → MCP Optimized

NO → Code-Skill
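The four questions above can be sketched as a function. The thresholds are copied from the decision tree, while the `TaskProfile` field names are hypothetical and introduced only for this example.

```typescript
type Approach = "Code-Skill" | "MCP Optimized" | "MCP Proxy";

// Hypothetical description of a task; field names are illustrative.
interface TaskProfile {
  expectedExecutions: number;
  datasetRows: number;
  needsVarianceUnder5Pct: boolean;
  toolCount: number;
  requirementsStable: boolean;
}

function chooseApproach(t: TaskProfile): Approach {
  // Q1: one-off task
  if (t.expectedExecutions < 5) return "Code-Skill";
  // Q2: larger dataset with a strict variance requirement
  if (t.datasetRows > 100 && t.needsVarianceUnder5Pct) return "MCP Optimized";
  // Q3: large tool catalog and a frequently repeated task
  if (t.toolCount > 20 && t.expectedExecutions > 50) return "MCP Proxy";
  // Q4: repeated task with stable requirements
  if (t.expectedExecutions > 20 && t.requirementsStable) return "MCP Optimized";
  return "Code-Skill";
}

const recommendation = chooseApproach({
  expectedExecutions: 200,
  datasetRows: 500,
  needsVarianceUnder5Pct: true,
  toolCount: 6,
  requirementsStable: true,
});
console.log(recommendation); // → "MCP Optimized"
```

The return type deliberately omits the two approaches the tree rules out, so the function cannot recommend them.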

NEVER:

- MCP Vanilla (always suboptimal)

- UTCP Code-Mode (for data analysis)

Hybrid Strategy

For uncertain repeatability, use a phased approach:

Phase 1 (executions 1-10): Use Code-Skill to validate task and understand requirements. Total cost: ~$4.40.

Phase 2 (decision point after 10 executions): If the task has stabilized and forecast volume is high enough to recover the investment, invest in MCP Optimized. Development: $2,500. Future savings: $0.23/execution, so token savings alone break even near 10,870 executions.

Phase 3 (ongoing monitoring): Track actual execution count and token trends. Migrate to MCP Optimized when break-even becomes certain.

This de-risks development investment while preserving optimization upside.

Implementation Guidance: Building Optimized Systems

File-Path Architecture Patterns

The core efficiency gain comes from eliminating data duplication. Instead of:

```typescript
// ❌ Anti-pattern: Data-passing
await call_tool('analyze_data', {
  data: [
    { name: 'Alice', dept: 'Engineering', salary: 95000 /* , ...remaining columns */ },
    { name: 'Bob', dept: 'Marketing', salary: 75000 /* , ...remaining columns */ },
    // ... 498 more rows
  ],
});
// Cost: ~10,000 tokens just for data parameter
```

Use file-path references:

```typescript
// ✅ Pattern: File-path reference
await call_tool('analyze_csv_file', {
  file_path: '/data/employees.csv',
  analysis_type: 'salary_by_department',
});
// Cost: ~50 tokens for parameters
```

The MCP server handles file I/O internally, processing data without polluting the AI's context window.
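On the server side, a file-path tool reads the file itself and returns only a compact summary. The following is a minimal sketch of that idea, not the study's actual server code. The argument names (`group_by`, `value_column`), the injected `readFile` function, the naive comma splitting (no quoted fields), and the sample data are all assumptions made to keep the example self-contained.

```typescript
// Hypothetical arguments for an analyze_csv_file-style tool.
interface AnalyzeArgs {
  file_path: string;
  group_by: string;
  value_column: string;
}

// File access is injected so the sketch runs without a real filesystem.
type ReadFile = (path: string) => string;

// Returns the mean of `value_column` per `group_by` value.
// Only this small summary travels back into the model's context.
function analyzeCsvFile(args: AnalyzeArgs, readFile: ReadFile): Record<string, number> {
  const lines = readFile(args.file_path).trim().split("\n");
  const headers = lines[0].split(",");
  const groupIdx = headers.indexOf(args.group_by);
  const valueIdx = headers.indexOf(args.value_column);
  if (groupIdx < 0 || valueIdx < 0) {
    throw new Error("Unknown column name");
  }
  const groups: Record<string, { total: number; count: number }> = {};
  for (const line of lines.slice(1)) {
    const cells = line.split(",");
    const key = cells[groupIdx];
    if (!(key in groups)) {
      groups[key] = { total: 0, count: 0 };
    }
    groups[key].total += Number(cells[valueIdx]);
    groups[key].count += 1;
  }
  const means: Record<string, number> = {};
  for (const key of Object.keys(groups)) {
    means[key] = groups[key].total / groups[key].count;
  }
  return means;
}

// Made-up in-memory file standing in for a CSV on disk.
const fakeFiles: Record<string, string> = {
  "/data/example.csv":
    "name,department,salary\nAlice,Engineering,100\nBob,Engineering,200\nCara,Marketing,50",
};

const salaryByDepartment = analyzeCsvFile(
  { file_path: "/data/example.csv", group_by: "department", value_column: "salary" },
  (path) => fakeFiles[path],
);
console.log(salaryByDepartment); // → { Engineering: 150, Marketing: 50 }
```

The size of the returned object depends on the number of groups, not the number of rows, which is the property that keeps context usage flat as files grow.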

Parallel Execution Patterns

File-path architecture enables parallel tool calls—multiple independent operations in a single API request:

```typescript
// ✅ Pattern: Parallel execution
await Promise.all([
  call_tool('create_visualization_from_file', {
    file_path: '/data/employees.csv',
    chart_type: 'bar',
    x_column: 'department',
    y_column: 'salary',
  }),
  call_tool('create_visualization_from_file', {
    file_path: '/data/employees.csv',
    chart_type: 'scatter',
    x_column: 'years_experience',
    y_column: 'salary',
  }),
  call_tool('create_visualization_from_file', {
    file_path: '/data/employees.csv',
    chart_type: 'pie',
    column: 'department',
  }),
  call_tool('create_visualization_from_file', {
    file_path: '/data/employees.csv',
    chart_type: 'bar',
    x_column: 'location',
    y_column: 'salary',
  }),
]);
// Cost: ~400 tokens total
// Time: 1 API call instead of 4 sequential calls
```

This achieves both token reduction (no repeated context) and latency reduction (one round trip instead of four).

Progressive Discovery Implementation

For systems with large tool catalogs, implement progressive discovery via meta-tools:

```typescript
// Initial context: 2 meta-tools (~400 tokens)
const meta_tools = [
  {
    name: 'describe_tools',
    description: 'Discover available tools by category or search',
    parameters: { category: 'optional', search: 'optional' },
  },
  {
    name: 'use_tool',
    description: 'Execute a specific tool by name',
    parameters: { tool_name: 'required', arguments: 'required' },
  },
];
// On-demand loading reduces upfront overhead from 10K+ to 400 tokens
```

Session 1 pays discovery cost. Sessions 2+ benefit from cached knowledge, achieving near-MCP-Optimized efficiency.
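Behind the two meta-tools sits some kind of catalog that answers discovery queries and dispatches calls. The sketch below is one hypothetical way to structure it. `ToolCatalog`, `ToolSpec`, and the registered stand-in tools are illustrative and do not describe how One-MCP is actually implemented.

```typescript
// A registered tool: metadata plus the function that runs it.
interface ToolSpec {
  name: string;
  description: string;
  run: (args: Record<string, unknown>) => unknown;
}

class ToolCatalog {
  private tools: Record<string, ToolSpec> = {};

  register(tool: ToolSpec): void {
    this.tools[tool.name] = tool;
  }

  // Backs `describe_tools`: returns only the matching names and descriptions,
  // so the model's context holds descriptions for the tools it asked about.
  describeTools(search?: string): { name: string; description: string }[] {
    const term = (search ?? "").toLowerCase();
    return Object.keys(this.tools)
      .map((toolName) => this.tools[toolName])
      .filter((t) => (t.name + " " + t.description).toLowerCase().indexOf(term) >= 0)
      .map((t) => ({ name: t.name, description: t.description }));
  }

  // Backs `use_tool`: dispatches to a registered tool by name.
  useTool(toolName: string, args: Record<string, unknown>): unknown {
    const tool = this.tools[toolName];
    if (!tool) {
      throw new Error(`Unknown tool: ${toolName}`);
    }
    return tool.run(args);
  }
}

// Stand-in tools that return strings instead of doing real work.
const catalog = new ToolCatalog();
catalog.register({
  name: "analyze_csv_file",
  description: "Summarize a CSV file by path",
  run: (args) => `analyzed ${String(args.file_path)}`,
});
catalog.register({
  name: "create_visualization_from_file",
  description: "Render a chart from a file path",
  run: () => "chart.png",
});

const csvMatches = catalog.describeTools("csv");
const toolOutput = catalog.useTool("analyze_csv_file", { file_path: "/data/employees.csv" });
console.log(csvMatches.length, toolOutput); // → 1 "analyzed /data/employees.csv"
```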

When to Stick with Code Generation

Despite efficiency advantages of tool-based approaches, code generation remains optimal for:

Rapidly evolving requirements: When task definition changes frequently, development investment in specialized tools doesn't amortize.

Novel one-off analyses: Exploratory work benefits from AI flexibility over structured tool constraints.

Low execution frequency: Tasks running < 20 times don't justify development investment.

Complex conditional logic: Workflows with extensive branching and dynamic execution paths may favor code generation over tool orchestration (though UTCP evidence suggests this needs validation).

The decision isn't "tools versus code"—it's matching architectural pattern to task characteristics and execution profile.

---

Key Takeaways

Architecture trumps protocol. The difference between file-path and data-passing approaches (60K versus 309K tokens) dwarfs the difference between protocols. Focus optimization efforts on data flow design, not protocol selection.

Scalability characteristics diverge. From 20 to 500 rows, file-path tools grew 1.5x in token usage while data passing grew up to 2.9x, which determines whether an approach works at 10,000 rows or fails at 1,000 rows. File-path architectures fundamentally scale better.

Development investment pays off at volume. With a $2,500 build and $0.23 saved per execution, break-even falls near 10,870 executions, or about 11 months at 1,000 executions per month. High-volume workflows are the ones worth optimizing first.

Consistency enables production readiness. Low variance (< 5%) approaches support SLA compliance, predictable costs, and reliable capacity planning. High variance (> 15%) approaches complicate production deployment.

Hybrid strategies manage uncertainty. Start with code generation for flexibility. Migrate to optimized tools after validating task stability and execution frequency. This de-risks development investment.

Claims require validation. UTCP code-mode underperformed claims by 40-68% for data analysis tasks. Protocol marketing promises deserve skepticism until validated in your specific domain.

The path forward isn't abandoning AI-assisted development—it's building sustainable architectures that scale. Token efficiency isn't an optimization exercise. It's a foundational design consideration that determines what's practical to automate.

Organizations investing in AI tooling today should prioritize architectural decisions that compound efficiency gains over time. The 5x difference in token consumption between approaches compounds when multiplied across hundreds of workflows and millions of executions.

Build for efficiency from the start. Your future self—and your CFO—will thank you.

---

Research Methodology: This analysis was conducted using controlled experiments with instrumented network requests capturing full token usage metadata. Raw data with PII redacted is available in the token-usage-metrics repository. All claims are reproducible using open-source tooling.
