Optimise Cost and Speed of Agentic Workflow - Reversed Engineering Approach

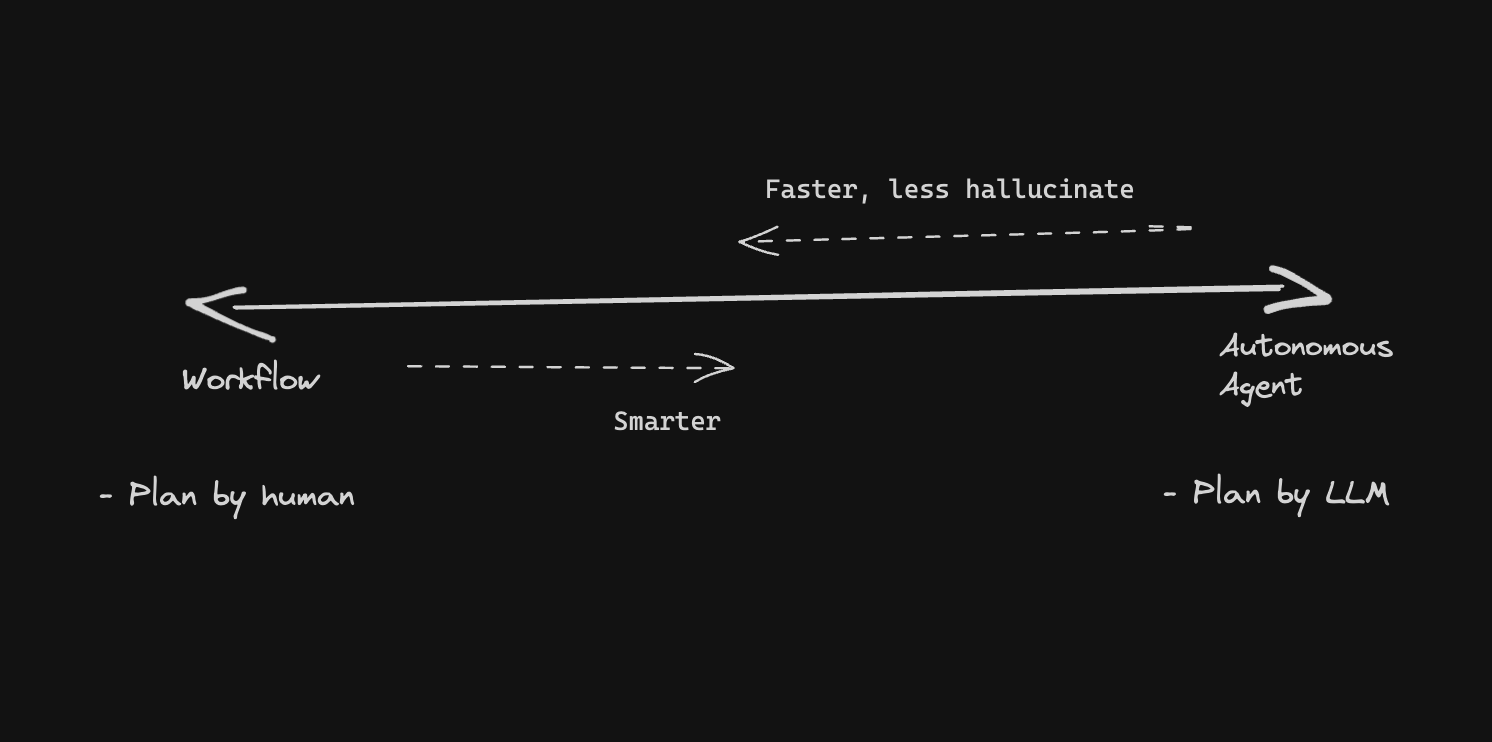

Autonomous Agents show immense potential in solving complex problems, but they can also be brittle, prone to hallucinations, expensive, and slow to run.

Agentic workflows are among the most powerful patterns in modern software. They also have a remarkable ability to run up a $300 bill on a task that should cost $3. The culprit is almost always the same: teams build the happy path first, watch it work, and only look at token counts and latency when they get the invoice.

This article documents a concrete optimization pass on a multi-agent dependency upgrade workflow — moving from ~$2 per run and 3 minutes of wall time down to ~$0.16 and under a minute — and generalizes the techniques that produced those gains.

The Economic Reality of Agentic Loops

The most dangerous trap in agent design is what some call quadratic token growth. LLMs charge for every input token on every turn. A Reflexion-style loop that runs 10 cycles can consume 50 times the tokens of a single linear pass, because each turn re-sends the full accumulated context. A crew of four agents collaborating on a single task routinely uses 3–5× more tokens than one agent handling the same task sequentially, because each handoff carries the full conversation history.

Before optimizing anything, instrument everything. You can't cut what you can't see. OpenTelemetry has become the industry standard for agent tracing — wrapping each LLM call, tool invocation, and retrieval step in a span with gen_ai semantic attributes (model name, token counts, finish reason). Pair it with a backend like Langfuse or LangSmith and you'll immediately see which agent steps are burning the most tokens and which are bottlenecks for latency.

Strategy 1: Flatten the Communication Architecture

The initial version of the dependency upgrade workflow used a bidirectional communication model where agents could query each other, request clarifications, and pass results back upstream. This mirrors how humans collaborate and feels intuitive to build. It is expensive to run.

For automation tasks, bidirectional communication almost always accumulates unnecessary context. Each clarification round trip adds tokens; each backtrack re-sends state that was already processed. The optimization was switching to a strict uni-directional pipeline: each agent receives inputs, does its job, passes structured outputs to the next step, and stops. Think of it like a manufacturing line rather than a meeting.

This change alone cut token usage by roughly 40% because intermediate reasoning no longer circulated back through earlier agents.

Strategy 2: Enable Prompt Caching

Every agent in the workflow carried a system prompt describing its role, constraints, and tool definitions. In the un-optimized version, this static content was re-sent and re-processed on every single LLM call.

Anthropic's prompt caching writes the computed key-value pairs for a prompt prefix to a cache for 5 minutes. Subsequent requests that share the same prefix pay 10% of the normal input token price instead of 100%. For a 10,000-token system prompt sent across 50 agent turns, that's 450,000 tokens charged at the cached rate. OpenAI applies caching automatically; Anthropic requires explicit cache_control annotations in the API call — an easy two-line change that most third-party clients skip by default.

In practice, enabling prompt caching on the system prompts and tool definitions in this workflow reduced input token costs by around 70% on those tokens.

Strategy 3: Route Tasks to Smaller Models

Not all agent steps are equal. The dependency upgrade workflow had five distinct sub-tasks:

- Fetching and parsing the current dependency manifest

- Checking each dependency against the registry for new versions

- Assessing whether a version bump is likely to introduce breaking changes

- Generating the updated manifest

- Writing a summary PR description

The first two are deterministic data tasks with no reasoning required. The fourth is template filling. Only the third and fifth steps genuinely need a frontier model's reasoning capability.

Routing the first, second, and fourth steps to a smaller model (Claude Haiku at $1/M tokens, GPT-4o mini at $0.15/M input tokens) and reserving Sonnet or GPT-4o for the analysis and synthesis steps reduced the average per-run model cost by over 60% with no measurable drop in output quality.

Strategy 4: Parallelize Independent Steps

Sequential execution is the default in most agent frameworks. It is almost never the right choice when steps don't depend on each other.

In the dependency workflow, checking each dependency against the registry was being done one at a time. A project with 40 dependencies took 40 sequential API calls. Parallelizing the registry lookup step with asyncio.gather or equivalent reduced that step from 45 seconds to 4 seconds.

The general rule from LangChain's latency analysis: if four tool calls each take 300ms sequentially (1.2 seconds total), those same calls complete in approximately 300ms when run in parallel. The gain scales linearly with the number of independent calls.

For steps that are strictly sequential but where one outcome is more likely than others, speculative execution is worth considering — starting two branches in parallel and discarding the losing branch once you know which path is correct. The losing branch's tokens are wasted, but the wall-clock time improvement can be worth it for latency-sensitive workflows.

Strategy 5: Control Context Explosion

Agent memory is necessary. Unbounded agent memory is a token incinerator.

The workflow was passing the full conversation history — including intermediate tool outputs, registry API responses, and scratchpad reasoning — into every subsequent agent call. Most of this content was not relevant to the downstream step. A step that needed the final list of version bumps was receiving 8,000 tokens of raw registry JSON alongside it.

The fix is explicit context management: each agent receives only the structured outputs it actually needs, not the history that produced them. Raw tool outputs are summarized or truncated before handoff. Intermediate scratchpad reasoning is dropped after the step completes.

From Google's production multi-agent framework analysis: effective context engineering means deciding what stays and what's removed at each step, not just accumulating everything the system has ever seen. Reducing context size also directly reduces hallucination risk — irrelevant details in a long context window are a known cause of models fixating on the wrong information.

Strategy 6: Design Tools to Minimize LLM Calls

Anthropic's Token-Efficient Tool Use feature reduces the verbosity of tool call outputs by 14–70% without information loss. Beyond that, the number of distinct tools an agent has access to matters. Tool definitions occupy prompt tokens. An agent with 30 tools available is paying for 30 tool descriptions on every call, even if only 2 are relevant to the current step.

The workflow was refactored to filter the available tool set per step — the registry lookup step only had registry tools; the analysis step only had analysis tools. This reduced average prompt size by around 800 tokens per call, which compounds quickly across a multi-step workflow.

Meta-tools — bundling multiple sequential actions into a single tool call — can further reduce total LLM invocations. Research from the "Optimizing Agentic Workflows using Meta-tools" paper shows meta-tools reduce the number of LLM calls by up to 11.9% while increasing task success rate, because fewer reasoning steps means fewer opportunities for the agent to drift off course.

The Results

After applying these optimizations systematically:

| Metric | Before | After |

|---|---|---|

| Cost per run | ~$2.00 | ~$0.16 |

| Wall-clock time | ~3 minutes | ~1 minute |

| Token reduction | — | ~88% |

Making It Production-Ready

Cost and speed are necessary but not sufficient for production. Three additional concerns require attention:

Evaluation. An agent that is fast and cheap but wrong is worse than a slow expensive one. Use statistical checks (schema validation, format assertions) for deterministic outputs and model-based judges for qualitative ones. Define a golden dataset of input/output pairs for your critical flows and run regressions before every deploy.

Observability. Every optimization decision in this article was informed by traces. Deploy OpenTelemetry instrumentation from the start — token counts, latency per step, tool call success rates — so you can see exactly where the next bottleneck is without guessing.

Human oversight. For high-stakes steps (merging a PR, deleting files, sending an external request), insert a human approval gate. The agent prepares the action; a human confirms it. This doesn't add significant latency to the happy path and prevents the class of costly mistakes that no amount of evaluation catches reliably.

Agentic systems are powerful enough to be worth building well. The optimization ceiling is higher than most teams expect: with prompt caching, model routing, parallelization, and disciplined context management, 70–90% cost reductions are routinely achievable without compromising output quality.

Full source code for the dependency upgrade workflow is available on GitHub.

More to read

Introducing the Agiflow CLI: Scaling AI Agents Across Machines

GitHub Actions was never built for the fast closed loop an agent needs — going back, redoing a step, fixing its own work. Local agent fan-out solved the loop on one laptop and broke on two. The Agiflow CLI is the convenience wrapper we use internally to drive workflow locks, work units, and artifacts through the Agiflow API — so agents on different machines can pull the same backlog without stepping on each other.

8 min readMulti-Agent Orchestration with Claude and Codex: Role Separation, Handoff Contracts, and Verification Gates

Architect multi-agent code systems that stay coherent. Learn role separation patterns, handoff contracts, and verification gates to prevent coordination failures.

18 min readRoadmap to Build Scalable Frontend Applications with AI: Atomic Design System, Token Efficiency, and Design Systems

Learn how to architect frontend applications that scale with AI assistance. Discover how atomic design methodology, component libraries, and design systems dramatically reduce token consumption while ensuring consistent, maintainable codebases.

18 min read